NVIDIA Blackwell Ultra Dominates Seven MLPerf AI Training Benchmarks, GB300 NVL72 Achieves Record 10-Minute Training for Llama 3.1 405B

NVIDIA has posted the top results in the latest round of MLPerf Training benchmarks. Its Blackwell Ultra-based GB300 NVL72 platform recorded the fastest time to train in all seven tests.

NVIDIA GB300 NVL72 Triumphs in MLPerf AI Training Benchmarks

NVIDIA's Blackwell-based data center GPUs have led previous MLPerf rounds, and the GB300 NVL72 platform continues that run. The company announced that its Blackwell Ultra-powered AI GPUs took the top spot in every MLPerf Training benchmark, which supports its claim that the GB300 NVL72 rack-scale system remains a prime choice for demanding AI workloads.

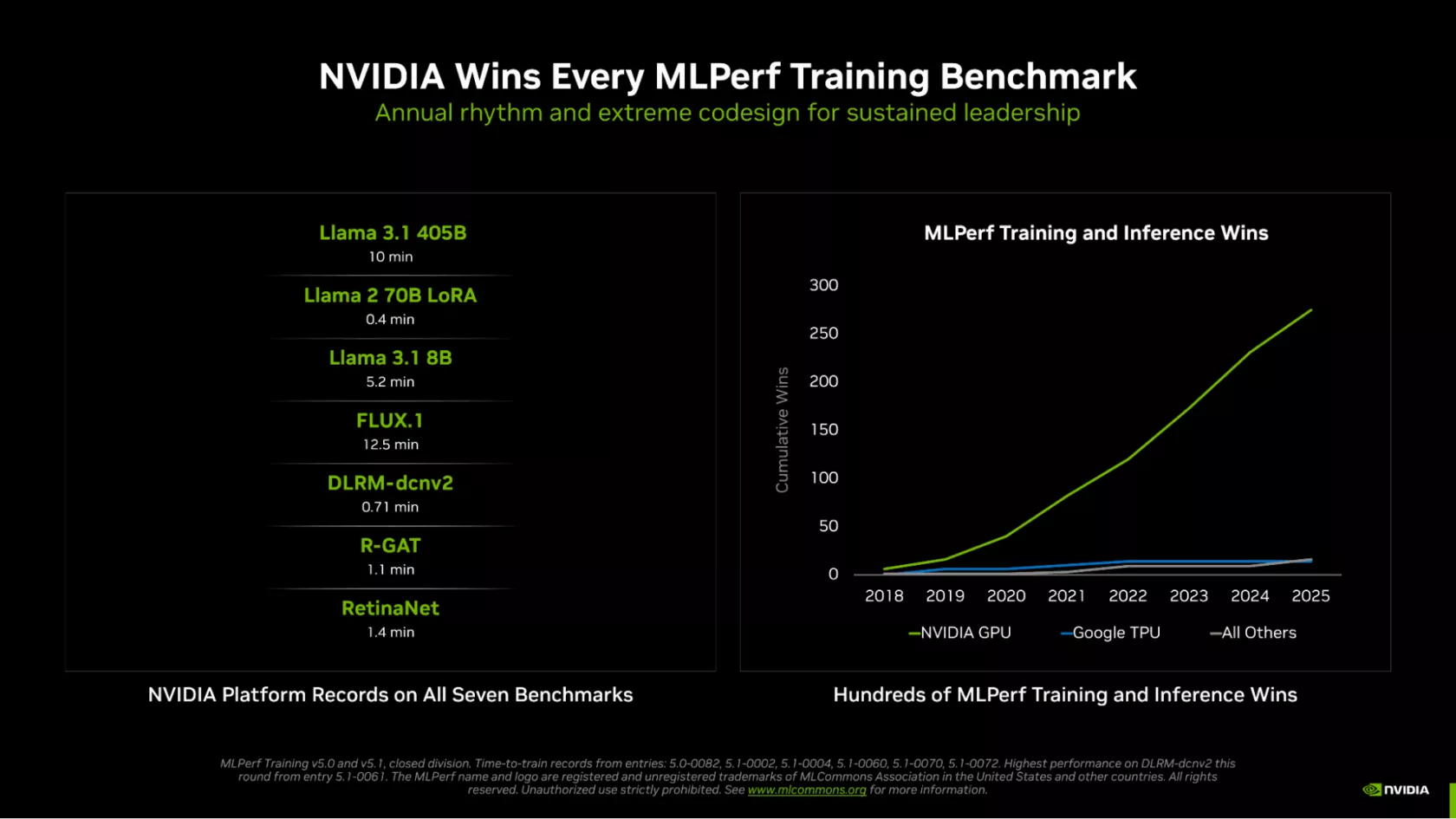

NVIDIA is reportedly the only company to have submitted results for every MLPerf Training test. The chart the company shared also claims “hundreds” of MLPerf Training and Inference wins for its platform in 2025 alone.

The winning time-to-train results were:

- Llama 3.1 405B: 10 min

- Llama 2 70B LoRA: 0.4 min

- Llama 3.1 8B: 5.2 min

- FLUX.1: 12.5 min

- DLRM-dcnv2: 0.71 min

- R-GAT: 1.1 min

- RetinaNet: 1.4 min

Stunning Performance Advancements

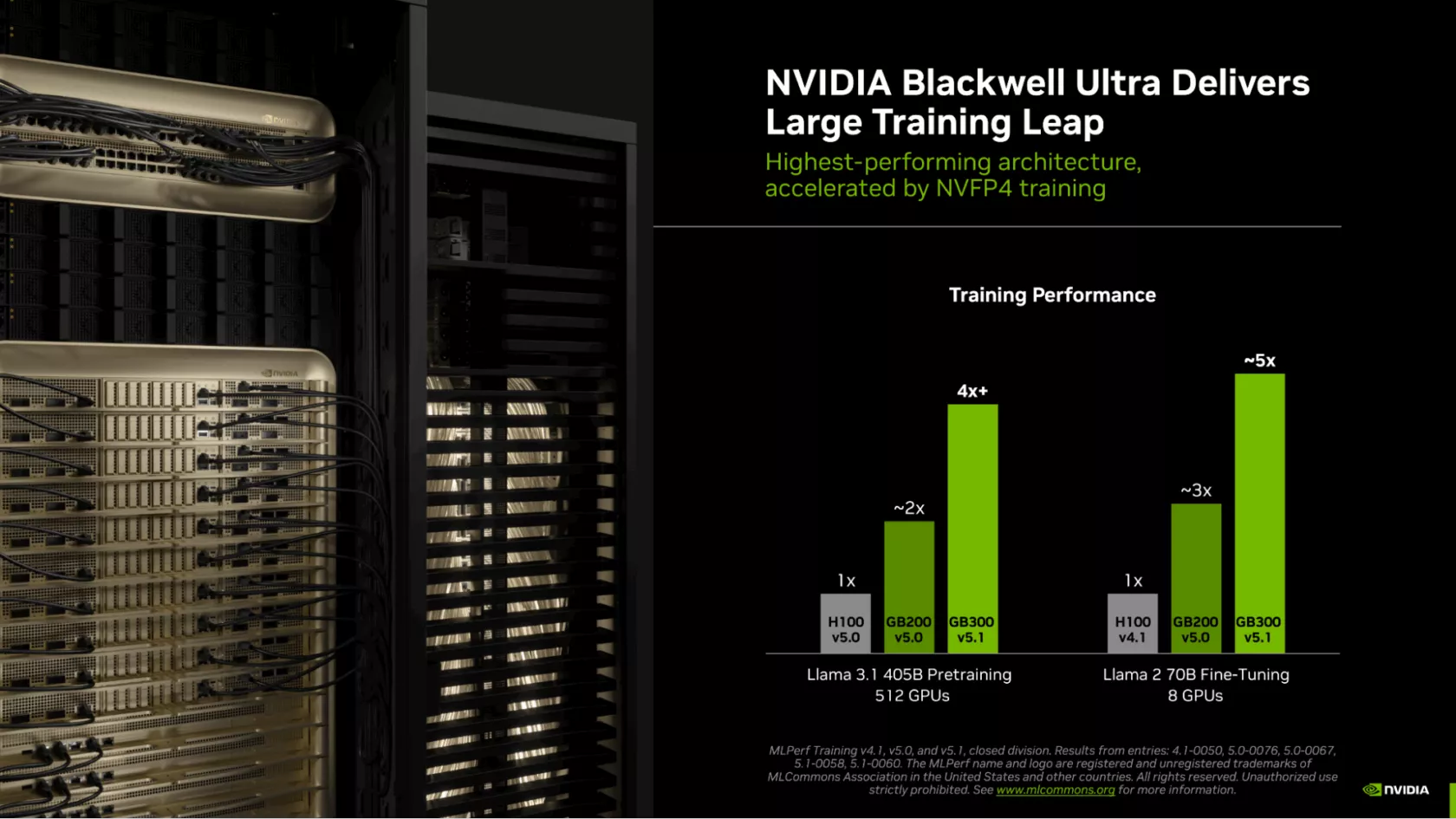

The benchmark results show that NVIDIA’s Blackwell Ultra GPUs outperform Hopper-based GPUs by a wide margin. In Llama 3.1 405B pretraining, the GB300 GPUs deliver over 4X the performance of H100 and nearly 2X that of the Blackwell GB200. In Llama 2 70B LoRA fine-tuning, the GB300 achieved 5X the performance of H100 using just eight GPUs.

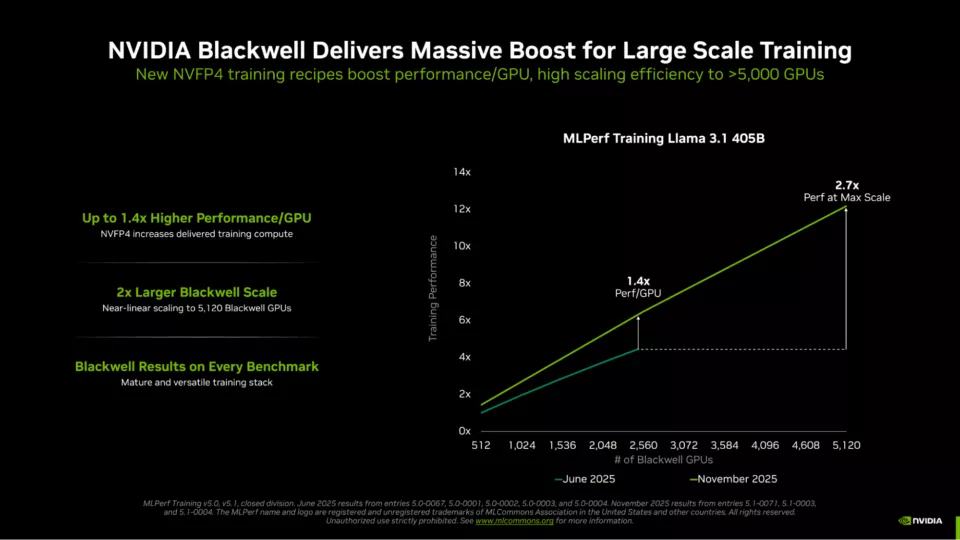

NVIDIA credits its success to the CUDA ecosystem, which it says offers a significant advantage over competitors. Beyond the CUDA software stack, the GB300 NVL72 benefits from Quantum-X800 InfiniBand networking at 800 Gb/s and 279 GB of HBM3e memory per GPU. Combined GPU and CPU memory reaches 40 TB. Using FP4 precision in training further improves performance, doubling calculation speed compared to FP8.
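As a rough sanity check on the memory figures above, the arithmetic can be sketched in a few lines of Python. This is a back-of-the-envelope estimate, not an official breakdown: it assumes 72 GPUs per rack (inferred from the NVL72 name), that the 40 TB figure refers to a single rack, and decimal units (1 TB = 1000 GB). The variable names are illustrative.

```python
# Back-of-the-envelope split of the quoted 40 TB between GPU and CPU memory.
# Assumptions (not stated in the article): 72 GPUs per rack, 40 TB is per rack,
# and decimal units (1 TB = 1000 GB).
GPUS_PER_RACK = 72        # assumed from the "NVL72" name
HBM_PER_GPU_GB = 279      # HBM3e per GPU, as quoted
TOTAL_MEMORY_TB = 40      # combined GPU + CPU memory, as quoted

gpu_memory_tb = GPUS_PER_RACK * HBM_PER_GPU_GB / 1000
cpu_memory_tb = TOTAL_MEMORY_TB - gpu_memory_tb  # remainder attributed to CPU memory

print(f"GPU HBM3e per rack: {gpu_memory_tb:.1f} TB")
print(f"Implied CPU memory: {cpu_memory_tb:.1f} TB")
```

Under these assumptions, roughly half of the quoted capacity is GPU HBM3e and the rest is CPU-attached memory.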

In the headline submission, 5,120 Blackwell GPUs completed the Llama 3.1 405B training benchmark in just 10 minutes, the fastest result NVIDIA has posted for that test.
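To put the headline number in perspective, the aggregate GPU time behind it can be estimated with a short Python sketch. It uses only the two figures quoted above (5,120 GPUs, 10 minutes) and assumes every GPU was busy for the full run. Note that MLPerf time to train measures the time to reach a target quality on the benchmark, not a full production pretraining run.

```python
# Rough aggregate GPU time for the Llama 3.1 405B result.
# Assumption: all GPUs were in use for the entire 10-minute run.
NUM_GPUS = 5120            # GPUs in the submission, as quoted
TIME_TO_TRAIN_MIN = 10     # time to train in minutes, as quoted

gpu_minutes = NUM_GPUS * TIME_TO_TRAIN_MIN
gpu_hours = gpu_minutes / 60

print(f"Aggregate GPU time: {gpu_minutes:,} GPU-minutes (~{gpu_hours:.0f} GPU-hours)")
```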